Optimint

Optimizing micro-tasks during opportune moments

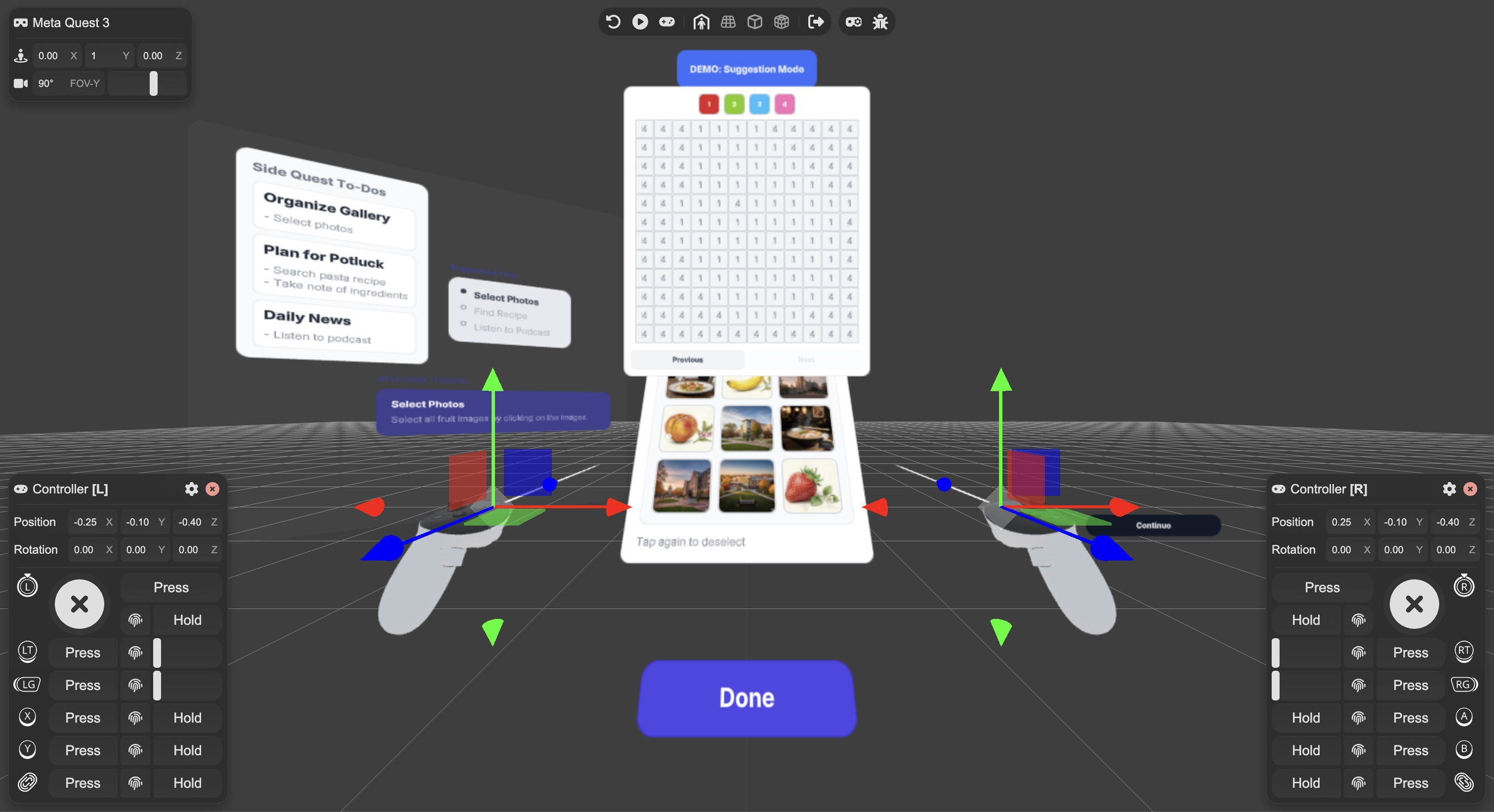

A context-aware productivity tool that computes the optimal sequence of micro-tasks across multiple workflows, maximizing task progress during fragmented moments. The research combines workflow modeling, integer linear programming (ILP) optimization, and adaptive interfaces to turn spare time into measurable productivity.

Research paper will be submitted to ACM IMWUT 2026 (UbiComp)

Role

Lead Researcher

Team

Hyunsung Cho (PhD)

Prof. David Lindlbauer

Timeline

May 2025 - Present

@ Augmented Perception Lab

Problem

There are numerous moments in our day when we have spare time.

Whether commuting to work by bus or waiting for the microwave to finish heating food, people often have trouble making good use of this scrap time and resort to scrolling. However, at times when I have many to-dos in my head and need to be productive, I want a system that suggests side tasks I can accomplish throughout the day.

Opportunity 1

Breaking down high-level tasks takes time away

The main reason people struggle to be productive during micro-moments is that they can’t find a task they can finish within a short 1-2 minute window. Therefore, our system should atomize high-level tasks (e.g., Plan trip to New York) into smaller micro-tasks (e.g., Find a hotel).
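As a rough illustration of what atomizing could look like as data, the sketch below stores a high-level task with a list of micro-tasks and filters them by a time window. The class names, the `est_seconds` field, and every micro-task other than "Find a hotel" are hypothetical and are not taken from the actual system.

```python
from dataclasses import dataclass, field


@dataclass
class MicroTask:
    name: str
    est_seconds: int  # hypothetical estimate of how long the step takes


@dataclass
class HighLevelTask:
    name: str
    micro_tasks: list[MicroTask] = field(default_factory=list)

    def fitting(self, window_seconds: int) -> list[MicroTask]:
        """Return the micro-tasks short enough to finish within the window."""
        return [m for m in self.micro_tasks if m.est_seconds <= window_seconds]


trip = HighLevelTask(
    "Plan trip to New York",
    [
        MicroTask("Find a hotel", 90),
        MicroTask("Compare flight prices", 120),        # hypothetical
        MicroTask("Draft a three-day itinerary", 600),  # hypothetical
    ],
)

# Names of the micro-tasks that fit a 2-minute window
short = [m.name for m in trip.fitting(120)]
```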

Opportunity 2

Context of opportunistic moments changes dynamically

A user’s situational limitations, such as the available duration or interaction modality (speech, sight, auditory, hand), vary across environments and primary tasks. Therefore, our system should analyze the user’s real-time context and match it with micro-tasks appropriate to those conditions.
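One way to picture this matching step is as a feasibility check: a micro-task qualifies only if it fits the time window and every modality it needs is currently free. The sketch below assumes that framing; `MicroTask`, `Context`, `is_feasible`, and the example tasks are hypothetical, and the modality labels are borrowed from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MicroTask:
    name: str
    est_seconds: int
    needs: frozenset  # modalities the task requires (assumed representation)


@dataclass(frozen=True)
class Context:
    window_seconds: int
    available: frozenset  # modalities the user currently has free


def is_feasible(task: MicroTask, ctx: Context) -> bool:
    """A task fits if it is short enough and needs only free modalities."""
    return task.est_seconds <= ctx.window_seconds and task.needs <= ctx.available


tasks = [
    MicroTask("Find a hotel", 90, frozenset({"sight", "hand"})),
    MicroTask("Dictate a packing list", 60, frozenset({"speech"})),
    MicroTask("Listen to a voicemail", 45, frozenset({"auditory"})),
]

# Hypothetical context: two minutes free, hands occupied
ctx = Context(120, frozenset({"sight", "auditory", "speech"}))
feasible = [t.name for t in tasks if is_feasible(t, ctx)]
```

Here "Find a hotel" is filtered out because it needs the hands, even though it would fit the time window.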

Solution

From context to optimized task flow

(Note: These demo videos are from the first prototype; the final version will be uploaded after paper submission.)

Set Time Frame

Task Suggestion

UI Generation

Once the system is invoked, it asks the user how much time is available. It then takes video input from the device and calls the Gemini API to analyze the user's context. With the context parameters set (available input and output modalities for performing the task, duration, available devices, and ongoing/completed tasks), it uses ILP to suggest the three most suitable tasks the user can perform in that situation. Once the user selects a task, the system generates an appropriate UI for carrying it out.
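The exact ILP formulation is not described here, so the following is only a guess at its general shape: a knapsack-style 0/1 program that picks micro-tasks to maximize total progress, subject to the time window and to prerequisite ordering within a workflow. To stay dependency-free, the sketch enumerates all 0/1 assignments instead of calling an ILP solver. The candidate tasks, their durations, and their progress values are all hypothetical.

```python
from itertools import product

# Hypothetical candidates: (name, seconds, progress value, prerequisite or None)
CANDIDATES = [
    ("Find a hotel", 90, 5, None),
    ("Book the hotel", 60, 8, "Find a hotel"),
    ("Reply to landlord", 45, 4, None),
    ("Rename photo album", 30, 1, None),
]


def solve(candidates, window_seconds, done=frozenset()):
    """Maximize sum(value_i * x_i) over x_i in {0, 1}, subject to
    sum(seconds_i * x_i) <= window_seconds and prerequisite constraints.
    Brute-force enumeration stands in for a real ILP solver."""
    best_value, best_pick = 0, ()
    for x in product((0, 1), repeat=len(candidates)):
        picked = [c for c, xi in zip(candidates, x) if xi]
        names = {c[0] for c in picked}
        # Time constraint: the chosen tasks must fit the window
        if sum(c[1] for c in picked) > window_seconds:
            continue
        # Precedence: a prerequisite must be done already or also chosen
        if any(c[3] and c[3] not in names and c[3] not in done for c in picked):
            continue
        value = sum(c[2] for c in picked)
        if value > best_value:
            best_value, best_pick = value, tuple(c[0] for c in picked)
    return best_value, best_pick


value, plan = solve(CANDIDATES, window_seconds=180)
```

Enumeration is exponential in the number of candidates, so it only works for a handful of tasks; a real deployment would hand the same objective and constraints to a dedicated solver.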